Toulmin model explained: argument analysis with LLMs

This guide explains the Toulmin model and shows how to apply it with LLMs for argument analysis. It includes a short definition of key parts like claim, grounds, and warrant, then moves into a practical proof-of-concept using Mistral Instruct.

Recently, I came across the Toulmin argument, a method developed by philosopher Stephen E. Toulmin.

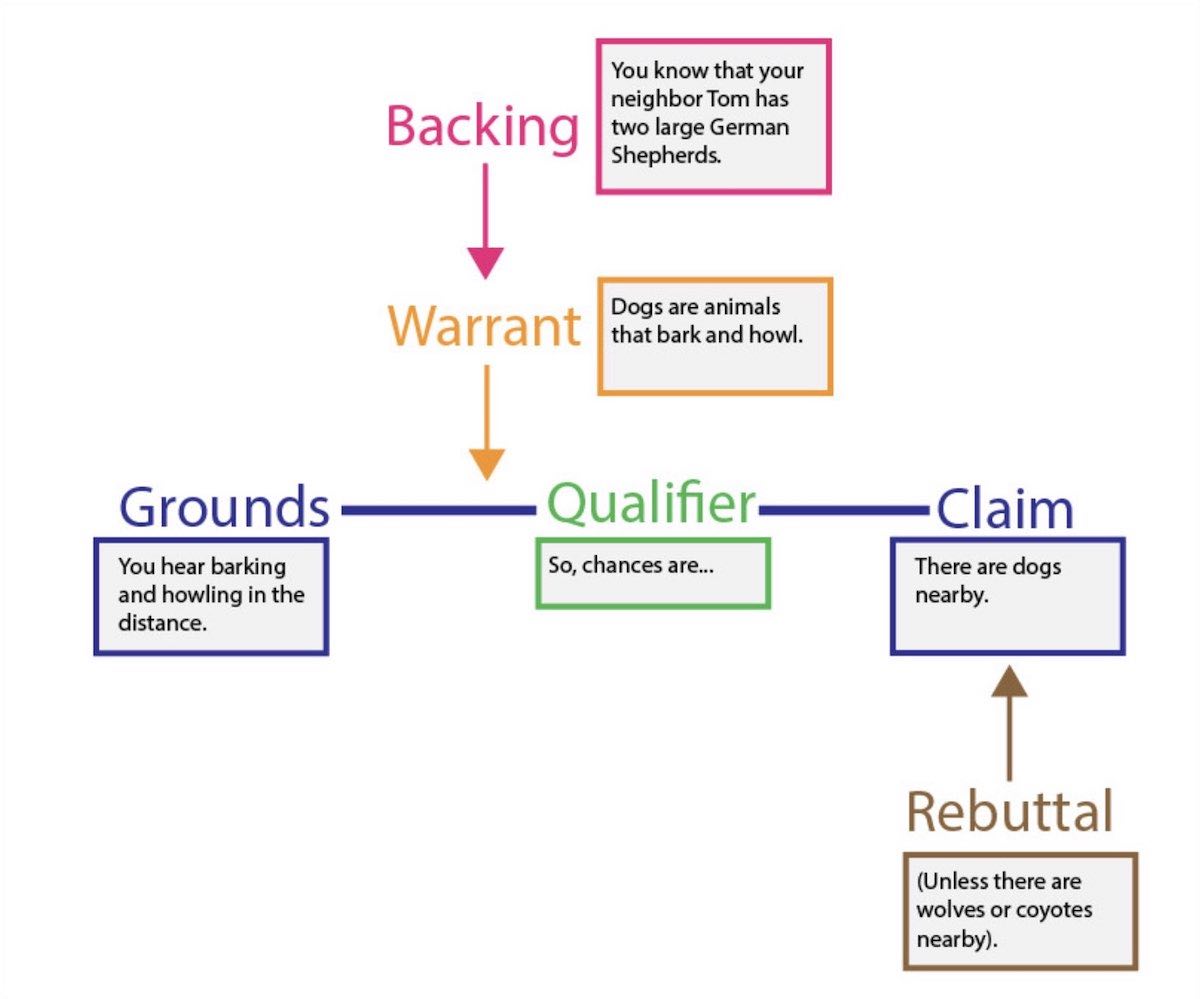

This method breaks down an argument into six parts: claim, grounds, warrant, backing, qualifier, and rebuttal.

I was intrigued by the potential of this method and decided to implement a proof of concept for an automated analysis using Mistral AI Instruct.

Image credit: Purdue Online Writing Lab

A brief explainer of the Toulmin argument

The Toulmin method breaks an argument down into six parts:

- Claim: This is the assertion that needs to be proven.

- Grounds: This is the evidence and the facts that support the claim.

- Warrant: This is the assumption that links the grounds to the claim.

- Backing: This provides additional support to the warrant.

- Qualifier: This limits the scope of the claim.

- Rebuttal: This acknowledges that other views are possible.
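To make the six parts concrete, here is a minimal sketch that stores them in a plain Python dataclass. The class name `ToulminParts` and the umbrella argument are illustrative assumptions made up for this example; they do not come from Toulmin or from the Purdue material.

```python
from dataclasses import dataclass, asdict


@dataclass
class ToulminParts:
    """Hypothetical container for the six Toulmin components."""
    claim: str
    grounds: str
    warrant: str
    backing: str
    qualifier: str
    rebuttal: str


# Illustrative argument, invented for this example.
example = ToulminParts(
    claim="You should take an umbrella today.",
    grounds="The forecast gives a 90% chance of rain this afternoon.",
    warrant="People who carry an umbrella stay dry when it rains.",
    backing="Forecasts for the same day are usually reliable.",
    qualifier="Probably",
    rebuttal="Unless you plan to stay indoors all day.",
)

# Print each component with its label.
for part, text in asdict(example).items():
    print(f"{part.capitalize()}: {text}")
```

Reading the printed parts in order shows how the warrant and backing justify the step from grounds to claim, while the qualifier and rebuttal limit it.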

Document and argumentation analysis at scale

The use of a large language model for automated analysis is fascinating due to its scalability. Imagine being able to extract claims and argumentation from all scientific publications.

This could significantly aid research by making arguments traceable, identifying weak links, strengthening arguments with credible sources, and helping to settle questions in disputed fields.
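One way to picture the "weak links" idea at scale: once each document has been reduced to its six components, a simple pass can flag analyses where a component is missing. The sketch below is only an assumption about how such a check might look. The function `find_weak_links` and the sample data are hypothetical, and an empty field is a crude proxy for a weak argument.

```python
from typing import Dict, List

COMPONENTS = ["claim", "grounds", "warrant", "backing", "qualifier", "rebuttal"]


def find_weak_links(analysis: Dict[str, str]) -> List[str]:
    """Return the names of components that are missing or blank."""
    return [name for name in COMPONENTS if not analysis.get(name, "").strip()]


# Hypothetical analyses keyed by document id (invented data).
analyses = {
    "paper-a": {
        "claim": "X improves Y.",
        "grounds": "Three trials report higher Y with X.",
        "warrant": "Controlled trials are a reliable guide to causal effects.",
        "backing": "",
        "qualifier": "In most adults",
        "rebuttal": "",
    },
    "paper-b": {"claim": "Z is harmless."},
}

# Build a per-document report of missing components.
report = {doc_id: find_weak_links(a) for doc_id, a in analyses.items()}
for doc_id, missing in report.items():
    print(doc_id, "is missing:", ", ".join(missing) or "nothing")
```

A real pipeline would need a better signal than emptiness, such as checking whether the grounds cite a source, but the shape of the loop would stay the same.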

However, the aspect that excites me the most is the potential to combat misinformation, especially in the scientific domain. With the rise of fake news and misinformation, having a tool that can automatically analyze and validate scientific claims could be a game-changer.

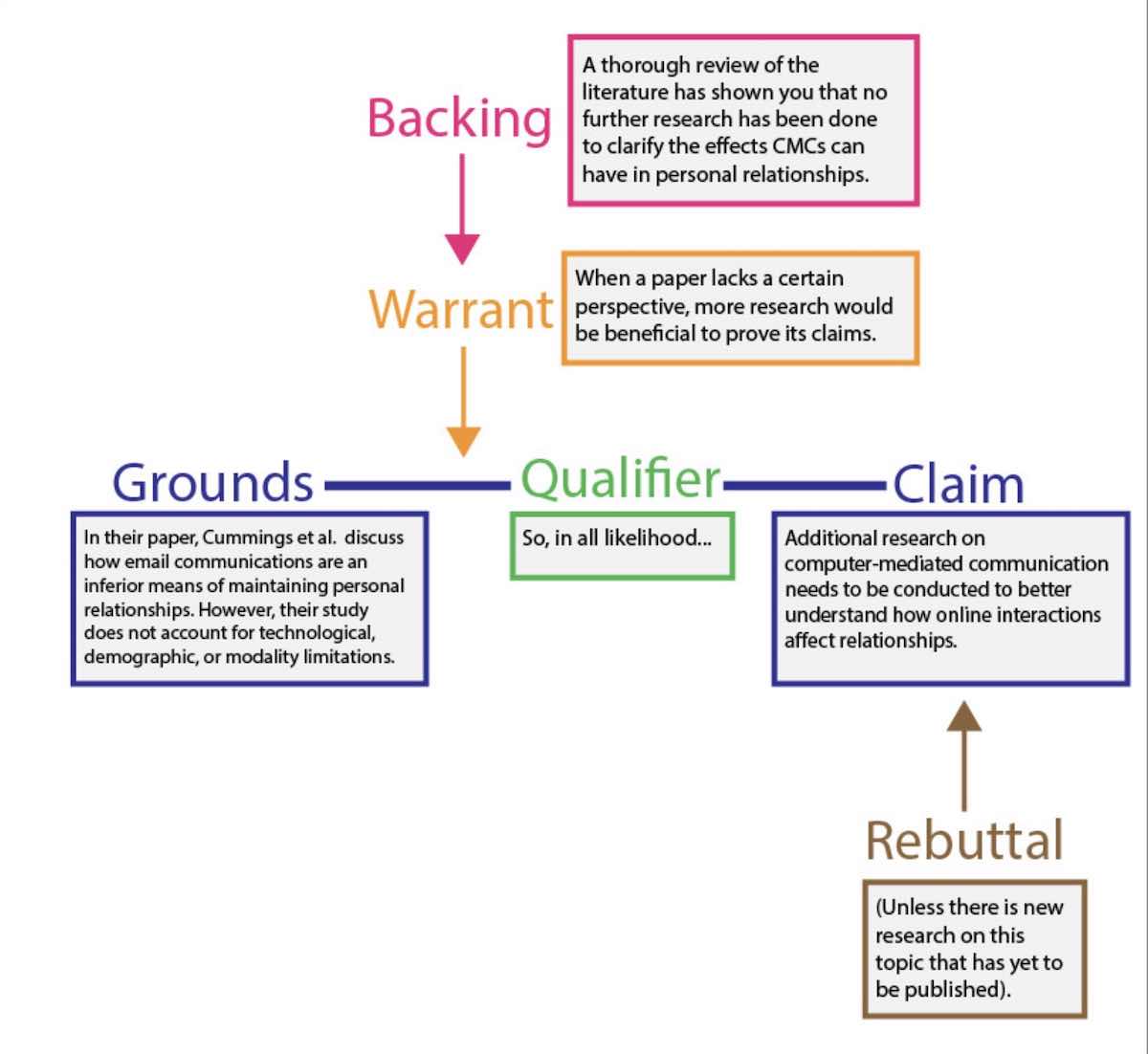

To illustrate how this works, let's take a sample paper from Purdue Online Writing Lab and analyze it using the Toulmin argument method.

The paper asserts that all forms of Computer-Mediated Communication (CMC) should be studied to fully understand how online communication affects relationships. Let's dive in.

Implementation of the Toulmin argument analysis with Mistral LLM

This is a basic proof of concept, but the interesting part of the code is mainly written in plain English:

    from dataclasses import dataclass

    # Import path assumed from the PyLLMCore package layout
    from llm_core.assistants import LLaMACPPAssistant


    @dataclass
    class ToulminArgumentAnalysis:
        # Instructions sent to the model; {content} is filled in by analyze()
        system_prompt = "You are an argumentation analysis expert"
        prompt = """
        Developed by philosopher Stephen E. Toulmin, the Toulmin
        method is a style of argumentation that breaks arguments down into six
        component parts:

        - claim
        - grounds
        - warrant
        - qualifier
        - rebuttal
        - backing.

        In Toulmin’s method, every argument begins with three fundamental parts:

        - the claim
        - the grounds
        - the warrant

        A claim is the assertion that authors would like to prove to their audience.
        It is, in other words, the main argument.

        The grounds of an argument are the evidence and facts that help support the claim.

        Finally, the warrant, which is either implied or stated explicitly,
        is the assumption that links the grounds to the claim.

        Backing refers to any additional support of the warrant.
        In many cases, the warrant is implied, and therefore the backing provides
        support for the warrant by giving a specific example that justifies the warrant.

        The qualifier shows that a claim may not be true in all circumstances.
        Words like “presumably,” “some,” and “many” help your audience understand
        that you know there are instances where your claim may not be correct.

        The rebuttal is an acknowledgement of another valid view of the situation.

        Here is a text we'd like to analyze:
        ---
        {content}
        ---

        > Perform a Toulmin analysis on the previous text.
        """

        # Fields the model is expected to fill in
        claim: str
        grounds: str
        warrant: str
        qualifier: str
        rebuttal: str
        backing: str

        @classmethod
        def analyze(cls, content):
            # Run the prompt through a local Mistral model and parse the answer
            with LLaMACPPAssistant(cls, model="mistral") as assistant:
                analysis = assistant.process(content=content)
                return analysis


    if __name__ == "__main__":
        # sample_paper must hold the text to analyze (its definition is not shown here)
        analysis = ToulminArgumentAnalysis.analyze(sample_paper)
        print(f"Claim: {analysis.claim}")
        print(f"Grounds: {analysis.grounds}")
        print(f"Warrant: {analysis.warrant}")
        print(f"Qualifier: {analysis.qualifier}")
        print(f"Rebuttal: {analysis.rebuttal}")
        print(f"Backing: {analysis.backing}")

The complete code is available in the GitHub repository of the PyLLMCore library:

    python3 examples/toulmin-model-argument-analysis.py

When we run the analysis on the sample paper, the results are very interesting:

**Claim**: All forms of CMC should be studied in order to fully understand how online communication effects relationships

**Grounds**: Numerous studies have been conducted on various facets of Internet relationships, focusing on the levels of intimacy, closeness, different communication modalities, and the frequency of use of computer-mediated communication (CMC). However, contradictory results are suggested within this research mostly because only certain aspects of CMC are investigated.

**Warrant**: CMC is defined and used as ‘email’ in creating feelings of closeness or intimacy. Other articles define CMC differently and, therefore, offer different results.

**Qualifier**: The strength of the relationship was predicted best by FtF and phone communication, as participants rated email as an inferior means of maintaining personal relationships as compared to FtF and phone contacts.

**Rebuttal**: Other studies define CMC differently and, therefore, offer different results.

**Backing**: Cummings et al.'s (2002) research in relation to three other research articles

This should be compared to the expected results:

Image credit: Purdue Online Writing Lab
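A rough way to make that comparison repeatable is to score each predicted component against a reference text. The sketch below uses `difflib.SequenceMatcher` from the Python standard library as a simple string-similarity measure. The `expected` texts are placeholders invented for this example, not the Purdue reference analysis, and character-level similarity is only a crude stand-in for judging whether two statements mean the same thing.

```python
from difflib import SequenceMatcher
from typing import Dict


def similarity(a: str, b: str) -> float:
    """Character-level similarity between 0.0 and 1.0, ignoring case."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def compare(predicted: Dict[str, str], expected: Dict[str, str]) -> Dict[str, float]:
    """Score every component that appears in the expected analysis."""
    return {
        name: round(similarity(predicted.get(name, ""), text), 2)
        for name, text in expected.items()
    }


# Two components taken from the model output shown earlier.
predicted = {
    "claim": "All forms of CMC should be studied in order to fully understand "
             "how online communication effects relationships",
    "rebuttal": "Other studies define CMC differently and, therefore, "
                "offer different results.",
}

# Placeholder reference texts, invented for this example.
expected = {
    "claim": "All forms of CMC should be studied in order to fully understand "
             "how online communication affects relationships",
    "rebuttal": "Some researchers argue that email alone is representative of CMC.",
}

scores = compare(predicted, expected)
for name, score in scores.items():
    print(f"{name}: {score}")
```

Low scores would point to the components worth reviewing by hand; a semantic measure, such as embedding similarity, would likely be a better fit than character matching for anything beyond a quick check.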

This is just a glimpse of what automated argumentative analysis can do. The potential applications could change not just peer reviews, but also how we validate information.

Additional resources: Argdown

As an additional note, the Argdown project defines a Markdown-like syntax for formalizing arguments.

An interesting follow-up project would be to fine-tune an LLM to translate written content into Argdown while performing the argumentative analysis.
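Short of fine-tuning, a Toulmin analysis can already be rendered as Argdown-style text with a few lines of Python. The sketch below is a simplified illustration, not a validated Argdown exporter. The function `to_argdown` is hypothetical, and the mapping it uses (grounds support the claim, the warrant supports the grounds, backing supports the warrant, the rebuttal attacks the claim) is one assumption among several possible ones. The qualifier is left out because it has no obvious counterpart in this mapping.

```python
from typing import Dict


def to_argdown(analysis: Dict[str, str]) -> str:
    """Render a Toulmin analysis as a small Argdown-style argument map.

    Uses `[Title]: text` for the central statement, `<Title>: text` for
    arguments, `+` for support and `-` for attack, nested by indentation.
    Texts are assumed to be single-line.
    """
    lines = [f"[Claim]: {analysis['claim']}"]
    if analysis.get("grounds"):
        lines.append(f"  + <Grounds>: {analysis['grounds']}")
        if analysis.get("warrant"):
            lines.append(f"    + <Warrant>: {analysis['warrant']}")
            if analysis.get("backing"):
                lines.append(f"      + <Backing>: {analysis['backing']}")
    if analysis.get("rebuttal"):
        lines.append(f"  - <Rebuttal>: {analysis['rebuttal']}")
    return "\n".join(lines)


# Shortened texts, loosely based on the analysis shown earlier.
analysis = {
    "claim": "All forms of CMC should be studied.",
    "grounds": "Studies that cover only some aspects of CMC report contradictory results.",
    "warrant": "Different definitions of CMC lead to different results.",
    "backing": "Cummings et al. (2002) compared with three other articles.",
    "rebuttal": "Other studies define CMC differently.",
}

argdown_text = to_argdown(analysis)
print(argdown_text)
```

The output can be pasted into an Argdown editor to check how it renders as a map, which is also a quick way to test whether the chosen mapping makes sense for a given text.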